Security Patch Coordination: Why the Technical Fix Is the Easy Part

Matthew Holmes

May 5, 2026 · 8 min read

A critical vulnerability drops on Tuesday morning.

You have 72 hours to patch 120 microservices before it hits Hacker News.

The fix is one line of code. The coordination is three days of chaos.

Patching a CVE across 120 microservices takes 72 hours not because the fix is hard, but because the coordination is. The technical work finishes Tuesday afternoon. Getting every team to merge their PR finishes Friday, if you’re lucky. The bottleneck is not patching capability. It is the manual follow-up, escalation, and status tracking that consumes platform teams during every critical security incident. Teams that reach 100% coverage inside 72 hours have one thing in common: they automated the coordination layer, not just the PR creation.

The Security Patch Time Bomb

What security teams see:

- CVE published: 9am Tuesday

- Severity: Critical (CVSS 9.8)

- Exploit in the wild: Yes

- Fix available: Yes

- Time to patch: Hours

What platform teams experience:

- Identify affected services: 2 hours

- Generate fixes: 30 minutes

- Create 120 PRs: 1 hour

- Get them all merged: 3 days (if you’re lucky)

The technical work is done by Tuesday afternoon.

The coordination work runs through Friday.

Why Security Patches Are Different From Normal Dependency Updates

Normal dependency update:

- Low urgency

- Teams can review at leisure

- Merge timeline: Flexible

Security patch:

- Extreme urgency

- Teams must review immediately

- Merge timeline: Hours, not days

The coordination challenge is 10x harder because the timeline is 10x shorter.

The Merge Rate Problem

You created 120 PRs on Tuesday.

Wednesday morning status:

- 35 merged (29%)

- 45 in review (38%)

- 40 untouched (33%)

Wednesday evening status:

- 68 merged (57%)

- 32 in review (27%)

- 20 untouched (17%)

Thursday morning (48 hours after CVE):

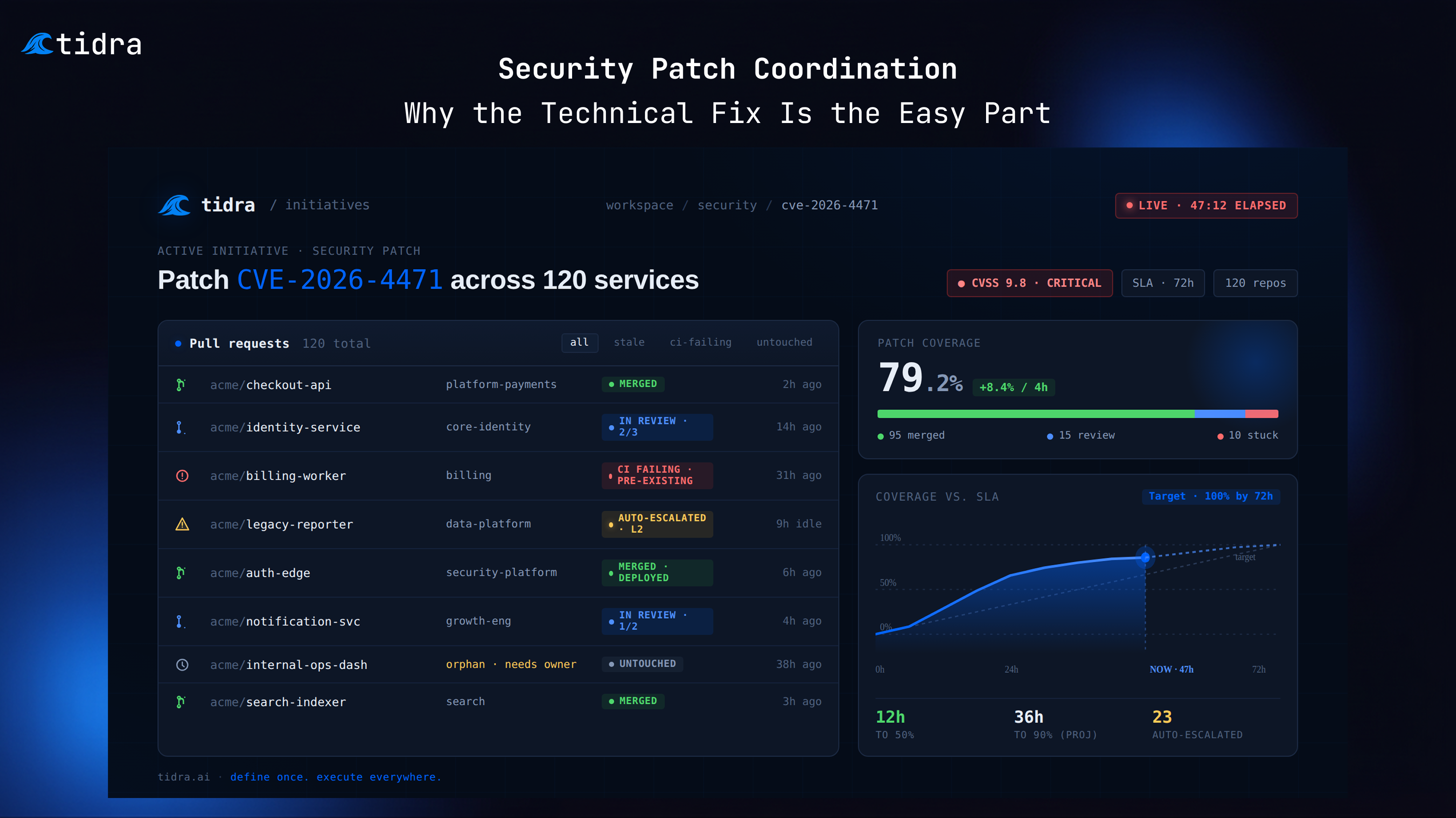

- 95 merged (79%)

- 15 in review (13%)

- 10 untouched (8%)

You need 100% coverage. You’re at 79%. The exploit is actively being used.

Now what?

Why Do the Last 20% of Security PRs Take the Longest?

The first 80% of PRs merge in 48 hours.

The last 20% take another 72 hours.

The stragglers are:

- Legacy services nobody maintains

- Teams on vacation

- Services with complex CI that’s currently broken

- Teams that “don’t check GitHub notifications”

- Repos owned by teams that no longer exist

These are the services that get exploited.

The Escalation Cascade

Hour 0: Critical CVE announced

Hour 2: Platform team creates 120 PRs, sends notifications

Hour 24: 29% merged. Teams reviewing.

Hour 36: 57% merged. Send reminders to stragglers.

Hour 48: 79% merged.

- Start escalating untouched PRs

- DM team leads directly

- Flag in engineering management Slack

Hour 60: 88% merged.

- Escalate remaining to VPs

- Security team demanding ETA for 100%

- You’re now in meetings instead of coordinating

Hour 72: 94% merged.

- CTO wants status report

- Considering emergency maintenance window

- 7 services still unpatched

This is where platform teams burn out. The technical work took about 3.5 hours. The coordination and escalation have now consumed 72, roughly 20x as much.

How to Patch CVEs Across a Large Codebase Quickly

Good security coordination is not faster manual work. It is automated coordination that removes humans from the notification, escalation, and status-tracking loop entirely.

Hour 0–2: Immediate Response

- Identify affected services automatically

- Generate fixes with AI assistance

- Create PRs with clear security context

- Send urgent notifications to all teams

Hour 4–12: Active Monitoring

- Real-time dashboard shows merge progress

- Automated reminders every 4 hours to teams with open PRs

- Surface blockers immediately (CI failures, merge conflicts)

- Prioritize by service criticality

Hour 12–24: Escalation Automation

- Auto-escalate PRs open >8 hours to team leads

- Generate status report for engineering managers

- Identify orphaned services needing emergency owners

- Flag services in production vs. staging

Hour 24–48: Completion Push

- Direct engagement with remaining teams

- Emergency merge approval process for critical services

- Coordinate hotfix deployments

- Verify patches in production

Automation handles the coordination. Humans handle the technical and political challenges. Every change still ships as a reviewable PR. Nothing merges without engineer approval.
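The reminder and escalation rules above reduce to a small decision per open PR. Here is a minimal sketch, assuming the 4-hour reminder interval and 8-hour escalation threshold from the lists above. The `OpenPR` record and `next_action` function are hypothetical names for illustration, not any particular tool's API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

REMINDER_INTERVAL = timedelta(hours=4)  # "automated reminders every 4 hours"
ESCALATION_AGE = timedelta(hours=8)     # "PRs open >8 hours" go to team leads


@dataclass
class OpenPR:
    """Hypothetical record for one still-open security PR."""
    service: str
    opened_at: datetime
    last_reminded_at: Optional[datetime] = None
    escalated: bool = False


def next_action(pr: OpenPR, now: datetime) -> str:
    """Return 'escalate', 'remind', or 'wait' for a still-open PR."""
    # Escalate once, as soon as the PR has been open longer than the threshold.
    if not pr.escalated and now - pr.opened_at > ESCALATION_AGE:
        return "escalate"
    # Otherwise remind if the team has not been pinged within the interval.
    last_ping = pr.last_reminded_at or pr.opened_at
    if now - last_ping >= REMINDER_INTERVAL:
        return "remind"
    return "wait"
```

The function only decides what should happen. Sending the message and merging the PR stay outside it, which keeps the approval step with an engineer.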

The Communication Challenge

During a security incident, you’re answering the same questions 50 times:

“What’s the impact?” Explain CVE severity, exploit status, affected services

“How many services are vulnerable?” Check spreadsheet, count services, maybe it’s out of date

“Which teams haven’t patched yet?” Manually check GitHub, correlate with team ownership

“What’s blocking the stragglers?” Chase down each team individually to find out

“When will we be at 100%?” Pure guesswork based on current velocity

Each answer requires about 10 minutes of investigation. Fifty of them add up to more than eight hours. You’re answering questions instead of coordinating.
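Several of these questions are the same query over PR state. The sketch below assumes each PR is available as a record with `service`, `team`, and `state` fields. Those field names and the three states are assumptions for illustration. In practice the data would come from your Git host and an ownership catalog.

```python
from collections import Counter, defaultdict


def summarize(prs):
    """Summarize patch status.

    prs: list of dicts with 'service', 'team', and 'state', where state is
    'merged', 'in_review', or 'untouched' (hypothetical schema).
    """
    counts = Counter(pr["state"] for pr in prs)
    total = len(prs)

    # "Which teams haven't patched yet?" -> open services grouped by team.
    open_by_team = defaultdict(list)
    for pr in prs:
        if pr["state"] != "merged":
            open_by_team[pr["team"]].append(pr["service"])

    coverage = 100 * counts["merged"] / total if total else 0.0
    return {
        "total": total,
        "merged": counts["merged"],
        "coverage_pct": round(coverage, 1),
        "open_by_team": dict(open_by_team),
    }
```

A summary like this answers "how many" and "which teams" in one call. It does not answer "when will we be at 100%", because the slowest PRs do not follow the average merge rate.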

The Priority Triage Problem

Not all 120 services carry equal risk:

Critical (30 services):

- Public-facing APIs

- Handle customer data

- High traffic

- Must patch: <24 hours

High (50 services):

- Internal services

- Limited exposure

- Important but not customer-facing

- Must patch: <48 hours

Medium (40 services):

- Internal tools

- Low traffic

- Minimal data access

- Must patch: <72 hours

Without automated tracking by priority tier, this becomes spreadsheet work during the worst possible window.
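Tracking by tier can be sketched in a few lines. The deadlines below are the 24, 48, and 72 hour targets listed above. The tuple layout and the `overdue_services` name are hypothetical.

```python
from datetime import datetime, timedelta

# Patch deadlines per tier, in hours after CVE publication (from the tiers above).
TIER_DEADLINE_HOURS = {"critical": 24, "high": 48, "medium": 72}


def overdue_services(services, cve_published, now):
    """Return unmerged services past their tier deadline, most overdue first.

    services: iterable of (name, tier, merged) tuples (hypothetical layout).
    Each result is (name, tier, hours_over_deadline).
    """
    overdue = []
    for name, tier, merged in services:
        deadline = cve_published + timedelta(hours=TIER_DEADLINE_HOURS[tier])
        if not merged and now > deadline:
            hours_over = (now - deadline).total_seconds() / 3600
            overdue.append((name, tier, hours_over))
    return sorted(overdue, key=lambda item: item[2], reverse=True)
```

Sorting by hours overdue puts a critical service that missed its 24-hour window ahead of a medium-tier tool that still has time left.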

The CI Failure Cascade

Security patches often trigger test failures:

Service A: Tests assume old behavior, break with patch

Service B: Integration test depends on Service A, now failing

Service C: End-to-end test covers A→B→C flow, completely broken

Now you’re debugging test failures across 15 services while trying to get 120 PRs merged.

The coordination question:

- Which failures are test issues vs. real problems?

- Who should fix them?

- Can we merge and fix tests later?

- Which services are blocking others?

This is where coordination breaks down. You’re firefighting test failures instead of tracking merge progress.
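One way to answer the first question is to compare the tests failing on the patch branch with the tests already failing on the main branch. This is a rough sketch that assumes you can get both sets of failing test names from CI. The function name and inputs are hypothetical, and flaky tests will blur the result.

```python
def classify_failures(failing_on_main, failing_on_pr):
    """Split a PR's failing tests by whether they already fail on main."""
    main, pr = set(failing_on_main), set(failing_on_pr)
    return {
        "pre_existing": sorted(pr & main),   # already broken before the patch
        "patch_induced": sorted(pr - main),  # fail only on the patch branch
    }
```

Pre-existing failures are candidates for "merge and fix tests later". Patch-induced failures need an owner before the PR goes in.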

The Merge Conflict Explosion

Security patches often touch the same files:

Tuesday 10am: Create 120 PRs

Wednesday 9am: 40 PRs merged to main

Wednesday 10am: 60 of the 80 remaining PRs now have merge conflicts

Teams must now pull latest main, resolve conflicts, re-test, re-push, and wait for CI again. Each conflict adds 30–60 minutes. With 60 conflicts, that is 30–60 hours of additional work.

Better approach: Batch conflicts. Resolve all at once. Re-push to all PRs simultaneously.
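One reading of "batch conflicts" is to group PRs that conflict on the same files, so a single resolution can be reapplied across the group. The sketch below assumes you already have a mapping from each PR to its conflicting file paths. The PR identifiers and the `batch_conflicts` name are made up for illustration.

```python
from collections import defaultdict


def batch_conflicts(conflicts):
    """Group PRs that conflict on exactly the same set of files.

    conflicts: dict mapping a PR identifier to its conflicting file paths.
    Returns a dict mapping a sorted tuple of paths to a sorted list of PRs.
    """
    batches = defaultdict(list)
    for pr, files in conflicts.items():
        batches[frozenset(files)].append(pr)
    return {tuple(sorted(files)): sorted(prs) for files, prs in batches.items()}
```

Whether one resolution really applies to every PR in a batch still needs a human check. The grouping only tells you where to look first.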

The Coverage Verification Problem

All PRs merged. Are you done?

No.

You need to verify:

- All services actually deployed the patch

- Production is running patched versions

- No rollbacks happened

- Monitoring shows expected behavior

This is the verification phase. Often forgotten in the chaos.
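The version check at the heart of verification can be sketched as a comparison between what production reports and the first fixed release. This assumes purely numeric dotted versions and a mapping of service to running dependency version that you collect yourself. Both function names are hypothetical.

```python
def parse_version(text):
    """Parse a dotted numeric version such as '2.17.1' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def unpatched_in_production(running, patched_version):
    """Return services running a version older than the first fixed release.

    running: dict mapping service name to the version of the vulnerable
    dependency observed in production (hypothetical input).
    """
    minimum = parse_version(patched_version)
    return sorted(
        name for name, version in running.items()
        if parse_version(version) < minimum
    )
```

Running the check on a schedule, rather than once after merge, also catches rollbacks: a service that drops back to an old version reappears in the list.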

How to Measure CVE Remediation Speed

Time to 50% coverage: How fast did half the services patch?

Time to 90% coverage: How fast did you get to near-complete?

Time to 100% coverage: How long for full coverage?

Targets that indicate working coordination:

- 50% in 12 hours

- 90% in 36 hours

- 100% in 72 hours

Numbers that indicate coordination is the constraint:

- 50% in 36 hours

- 90% in 5 days

- 100% in 2 weeks

If your time-to-100% is measured in weeks, patching capability is not the problem. Organizations that patch CVEs across hundreds of repos within 72 hours have automated the coordination layer. Those that take weeks have not.
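These three metrics fall out of the merge timestamps. Here is a minimal sketch, assuming you have the CVE publication time and one merge time per patched service. The function name and signature are illustrative.

```python
from datetime import datetime, timedelta


def hours_to_coverage(cve_published, merge_times, total_services, percent):
    """Hours from CVE publication until `percent` of services had merged.

    Returns None if that coverage level has not been reached yet.
    """
    # Services needed to reach the target, rounded up with integer arithmetic.
    needed = (percent * total_services + 99) // 100
    if needed == 0:
        return 0.0
    merged = sorted(merge_times)
    if len(merged) < needed:
        return None
    return (merged[needed - 1] - cve_published).total_seconds() / 3600
```

Calling it with 50, 90, and 100 after each incident gives the three numbers to compare against the targets above. For a stricter measure, pass deployment times instead of merge times.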

The Automation Stack for Security Patching Across Multiple Repos

Most security incidents aren’t failed by the platform team that created the PRs. They’re failed by the gap between “PRs created” and “PRs deployed and verified.” That gap is coordination, escalation, and status tracking — none of which is solved by a faster PR generation tool.

We built Tidra to close that gap. Not just the PR creation — the real-time merge tracking, the automatic escalation when a PR goes stale at hour 8, the CI failure surfacing that tells you whether a test broke because of the patch or because it was already broken. Every change is still a reviewable PR. Nothing ships without an engineer approving it. The automation is in the coordination layer, not the merge button.

Detection:

- Monitor CVE databases

- Scan dependencies automatically

- Identify affected services instantly

Remediation:

- Generate fixes with AI assistance

- Create PRs with security context

- Run tests automatically

Coordination:

- Track merge status in real-time

- Escalate stale PRs at hour 8 automatically

- Surface CI failures and distinguish patch failures from pre-existing breaks

- Send targeted reminders, not broadcast noise

Verification:

- Check deployment status

- Verify running versions

- Monitor for issues

- Report coverage

The Bottom Line

Security patches fail not because of technical challenges.

They fail because coordination breaks down.

The technical fix is one line of code. Getting 120 teams to merge it in 72 hours is not a patching problem. It is a coordination problem. Teams that reach 100% coverage within the response window have automated the reminders, escalations, and status tracking that consume platform engineers during every incident. Teams that rely on manual follow-up hit 94% by Friday and explain to the CTO why 7 services are still exposed.

The question every security leader should be asking after an incident: how many of those 72 hours did your platform team spend writing Slack messages?

If your last CVE took longer than 72 hours to reach 100% coverage, the bottleneck wasn’t your engineers’ ability to merge. It was everything that had to happen to get them to open the PR.
